The Nuxeo Vision addon provides a gateway to computer vision services. Currently it supports the Google Vision API and Amazon Rekognition API. Other services can be easily integrated as they become available. These external services allow you to understand the content of an image by encapsulating powerful machine learning models. This gateway provides a wide range of features including shape recognition, auto-classification of images, OCR, face detection and more.

Installation and Configuration

This addon requires no specific installation steps. It can be installed like any other package, with the nuxeoctl command line or from the Marketplace.

To install the Nuxeo Vision Package, you have several options:

- From the Nuxeo Marketplace: install the Nuxeo Vision package.

- From the command line:

nuxeoctl mp-install nuxeo-vision

- From the Admin Center on JSF UI, go to Admin > Update Center > Packages from Nuxeo Marketplace.

Google Vision Configuration

- Configure a Google service account

- Google Cloud Computing invoicing changes from time to time: it is likely that you'll need to activate billing on your account.

- You can generate either an API Key or a Service Account Key (saved as a JSON file)

Using an API key is a common security best practice; we recommend avoiding a service account.

- If you generated an API key, use the org.nuxeo.vision.google.key parameter:

org.nuxeo.vision.google.key=[your_api_key_goes_here]

- If you created a Service Account Key, install it on your server and edit your nuxeo.conf to add the full path to the file:

org.nuxeo.vision.google.credential=[path_to_credentials_goes_here]

See Google Documentation about Vision for more information.

AWS Rekognition Configuration

- Configure a key/secret pair in the AWS console

- Check the FAQ to see in which regions the API is available

- Edit nuxeo.conf with the suitable information:

org.nuxeo.vision.default.provider=aws
nuxeo.aws.region=
nuxeo.aws.accessKeyId=
nuxeo.aws.secretKey=

Functional Overview

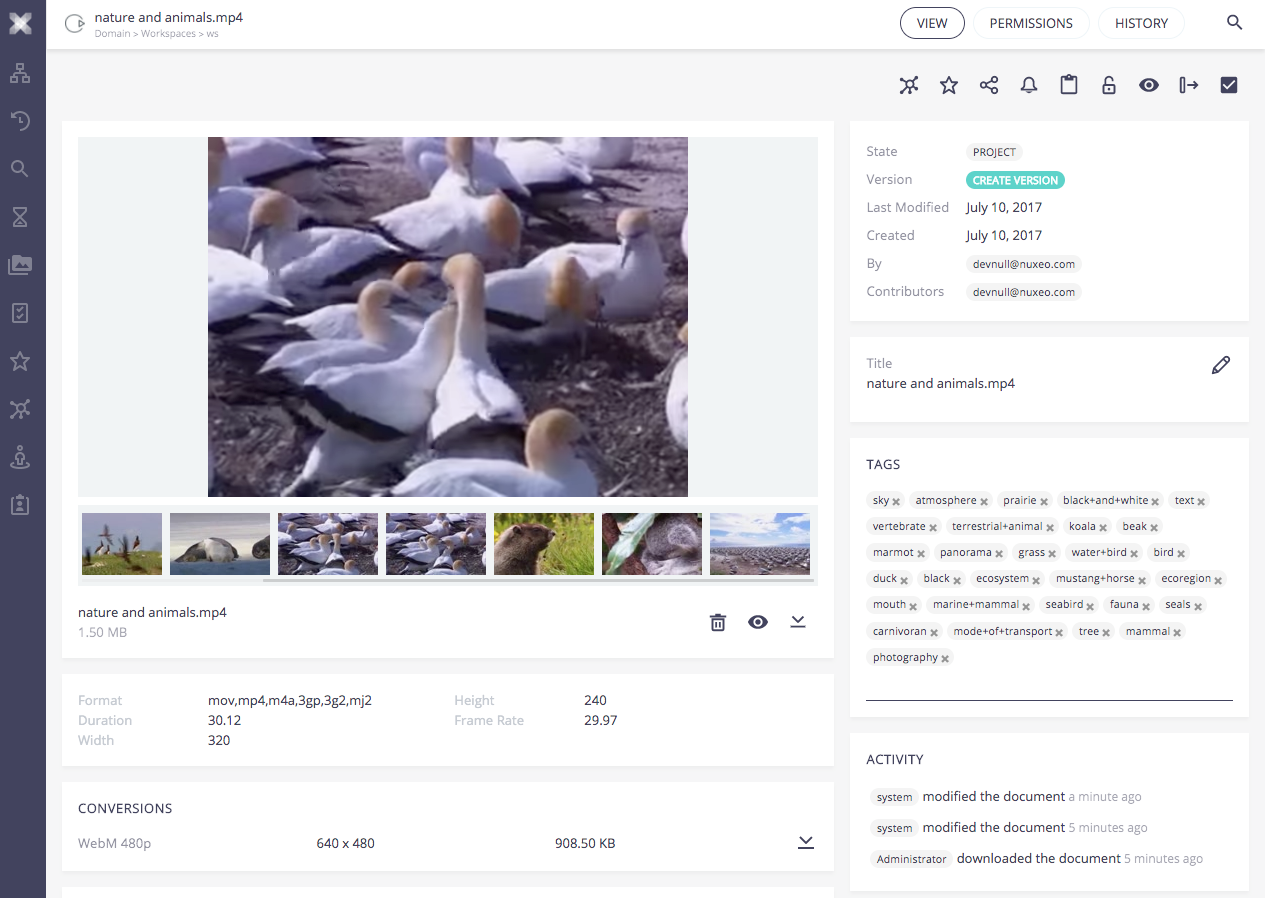

By default, the Computer Vision Service is called every time the main binary file of a picture or video document is updated. Classification labels are stored in the Tags property.

For videos, the platform sends the images of the storyboard to the cloud service.

Customization

Overriding the Default Behavior

The default behavior is defined in two automation chains which can be overridden with an XML contribution.

- Once the addon is installed on your Nuxeo instance, import the VisionOp operation definition in your Studio project. See the instructions on the page Referencing an Externally Defined Operation.

- Create your automation chains and use the operation inside them. You can use the regular automation chains or Automation Scripting.

- Create an XML extension that specifies that your automation chains should be used.

<extension target="org.nuxeo.vision.core.service" point="configuration">
  <configuration>
    <defaultProviderName>${org.nuxeo.vision.default.provider:=}</defaultProviderName>
    <pictureMapperChainName>MY_PICTURE_CHAIN</pictureMapperChainName>
    <videoMapperChainName>MY_VIDEO_CHAIN</videoMapperChainName>
  </configuration>
</extension>

- Deploy your Studio customization.

Disabling the Default Behavior

The default behavior can also be completely disabled with the following contribution:

<extension target="org.nuxeo.ecm.core.event.EventServiceComponent" point="listener">
  <listener name="visionPictureConversionChangedListener" class="org.nuxeo.vision.core.listener.PictureConversionChangedListener" enabled="false"></listener>
  <listener name="visionVideoChangedListener" class="org.nuxeo.vision.core.listener.VideoStoryboardChangedListener" enabled="false"></listener>
</extension>

Core Implementation

VisionOp Operation

In order to enable you to build your own custom logic, the addon provides an automation operation, called VisionOp. This operation takes a blob or list of blobs as input and calls the Computer Vision service for each one.

The result of the operation is stored in a context variable and is an object of type VisionResponse.

Here is how the operation is used in the default chain:

function run(input, params) {
  // Work on the 'Medium' view of the picture rather than the main binary
  var blob = Picture.GetView(input, {
    'viewName': 'Medium'
  });
  // Request up to 5 labels; the result is stored in ctx.annotations
  blob = VisionOp(blob, {
    features: ['LABEL_DETECTION'],
    maxResults: 5,
    outputVariable: 'annotations'
  });
  var annotations = ctx.annotations;
  if (annotations === undefined || annotations.length === 0) {
    return;
  }
  // A single blob was sent, so read the first response
  var textAndLabels = annotations[0];
  // build tag list
  var labels = textAndLabels.getClassificationLabels();
  if (labels !== undefined && labels !== null && labels.length > 0) {
    var tags = [];
    for (var i = 0; i < labels.length; i++) {
      var label = labels[i];
      var tag = label.getText();
      if (tag === undefined || tag === null) {
        continue;
      }
      // Replace each run of non-alphanumeric characters with a single '+'
      tags.push(tag.replace(/[^A-Z0-9]+/ig, '+'));
    }
    input = Services.TagDocument(input, {'tags': tags});
  }
  input = Document.Save(input, {});
  return input;
}
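The tag-building step of the chain above can be tried in isolation. The following is a minimal sketch in plain JavaScript, runnable outside Nuxeo: `labelsToTags` is a hypothetical helper name, not part of the addon, and it takes plain strings where the chain reads `label.getText()`. It only illustrates what the replacement expression does to label text.

```javascript
// Hypothetical helper (not part of the addon): turn label texts into tag strings.
function labelsToTags(labelTexts) {
  var tags = [];
  for (var i = 0; i < labelTexts.length; i++) {
    var text = labelTexts[i];
    if (text === undefined || text === null) {
      continue; // skip labels that carry no text
    }
    // Same expression as the default chain: each run of characters other
    // than ASCII letters and digits becomes a single '+'.
    tags.push(text.replace(/[^A-Z0-9]+/ig, '+'));
  }
  return tags;
}

var sample = labelsToTags(['Mountain range', 'sky', null, 'black-and-white']);
console.log(sample); // [ 'Mountain+range', 'sky', 'black+and+white' ]
```

Because the character class covers only ASCII letters and digits, accented letters are replaced as well. If your labels may contain them, you would likely want a different expression in a custom chain.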

Listeners and Events

When using the default implementation, Nuxeo Vision sends events once the processing is done:

- After handling a picture, it sends the visionOnImageDone event

- After processing a video (actually, the video storyboard), it sends the visionOnVideoDone event

Listening to these events is a good way to process your own business logic when it depends on the result of the tagging: you are then sure it was processed with no error. If an error occurred during the call to the service, these events are not fired and server.log will contain the error stack.

These events are not sent if automatic processing has been disabled, and they are not sent by the VisionOp operation. If you change the default behavior, you may want to send the events yourself, depending on your configuration.
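As an illustration, a custom picture chain could fire the event itself once the document has been saved. The sketch below assumes the platform's Event.Fire automation operation is available in your scripting context and takes the event name in a `name` parameter. The two stub objects at the top are stand-ins so that the snippet runs outside Nuxeo; they are not part of the addon and must be removed in a real chain.

```javascript
// Stand-ins so the sketch runs outside Nuxeo. Inside Automation Scripting the
// platform supplies Document.Save and Event.Fire: delete these stubs there.
var firedEvents = [];
var Document = {
  Save: function (doc, params) { return doc; }
};
var Event = {
  Fire: function (doc, params) {
    firedEvents.push(params.name); // record the event name for inspection
    return doc;
  }
};

function run(input, params) {
  // ... your own VisionOp call and mapping logic would go here ...

  input = Document.Save(input, {});

  // Assumption: Event.Fire reads the event name from the 'name' parameter.
  // Fire only after a successful save, mirroring the default behavior.
  Event.Fire(input, { 'name': 'visionOnImageDone' });
  return input;
}

run({ title: 'sample' }, {});
console.log(firedEvents);
```

A video chain would follow the same pattern with visionOnVideoDone. Whether to fire the events at all depends on whether other listeners in your configuration rely on them.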

Google Vision and AWS Rekognition API Limitations

Google Vision API has some known and documented limitations you should be aware of.

You should also regularly check the Google Vision API documentation for changes. For example, TIFF was not supported when the API was first released, but it is supported as of December 2018.

Amazon Rekognition doesn't provide text-recognition services (OCR). With this provider, Nuxeo Vision implements only label detection and safe search.

Because these are cloud services, their limitations evolve over time and may depend on your subscription.