Files and Blobs

A file is what users commonly handle on their desktop or on any other file system: binary content managed by a file system, which means it has a location (or several locations if fragmented), a path and a name. The Nuxeo Platform has no concept of file system. Content is stored as a binary stream, and the address of that content is stored in the database. The database has the notion of "Blob", which represents the binary stream together with a set of metadata:

- The hash of the binary stream

- The length

- A name

- A MIME type

At the Core level, blobs are bound to documents via a property of type BlobProperty. A document can therefore store multiple files, either as standalone blob properties or as a list of blob properties. When declaring such a property in a schema, it has to be of type nxs:content:

    <xs:element name="test" type="nxs:content"/>

This corresponds to selecting "Blob" as the type in Nuxeo Studio.

You can then set a blob on a given property using either the Document.SetBlob operation or the setPropertyValue(String xpath, Serializable value) method of the DocumentModel object in the Java API. You can also have a look at the BlobAdapter pattern.

Blob Manager and Blob Providers

At a lower level, blobs are managed in the Nuxeo Platform by Blob Providers. Most of the time, blob Java objects implement the ManagedBlob interface, which provides the getKey() method. This method returns an id identifying the blob; the id starts with a prefix that names the Blob Provider used to retrieve it.

A Blob Provider is a component that provides an API to read and write binary streams as well as additional services such as:

- Getting associated thumbnail of a binary stream

- Getting a download URI

- Some version management features

- Getting available conversions

- Getting registered applications links

As we will see later in this page, the Nuxeo Platform is shipped with several Blob Provider implementations:

- File System implementation

- S3, Azure, ...

- Google Drive, Dropbox

A single Nuxeo Platform instance can use several Blob Providers. The BlobManager service is in charge of determining, for read and write operations, which Blob Provider should be used depending on various parameters.

A typical low-level Java call for creating a file is the following:

    BlobManager blobManager = Framework.getService(BlobManager.class);
    String key = blobManager.writeBlob(blob, doc);

The BlobManager service uses the contributed BlobDispatcher class (or the default one) for determining which Blob Provider to use for persisting the blob. It can thus accept the document as a parameter. We will review in a paragraph below how the default BlobDispatcher works.

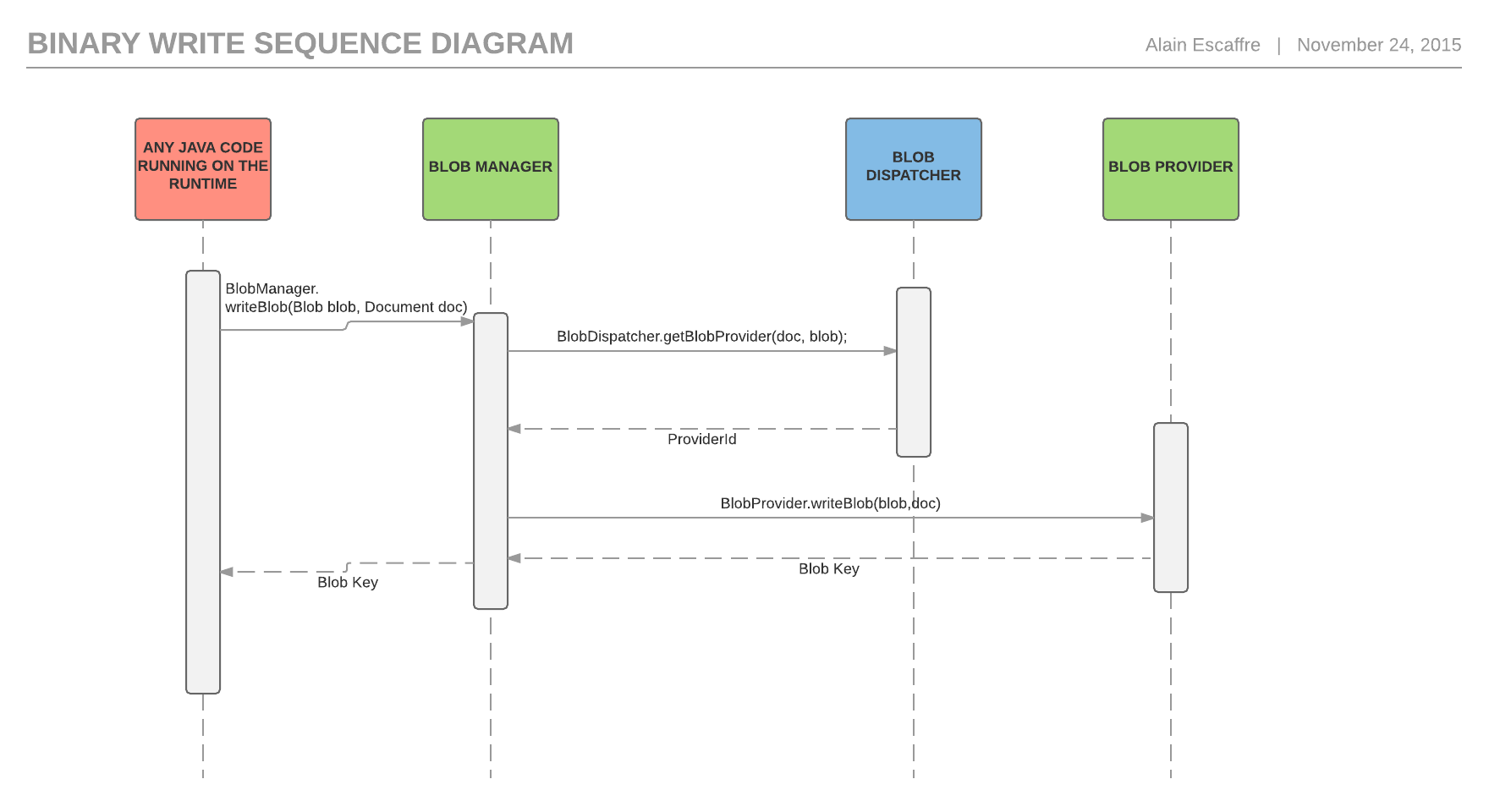

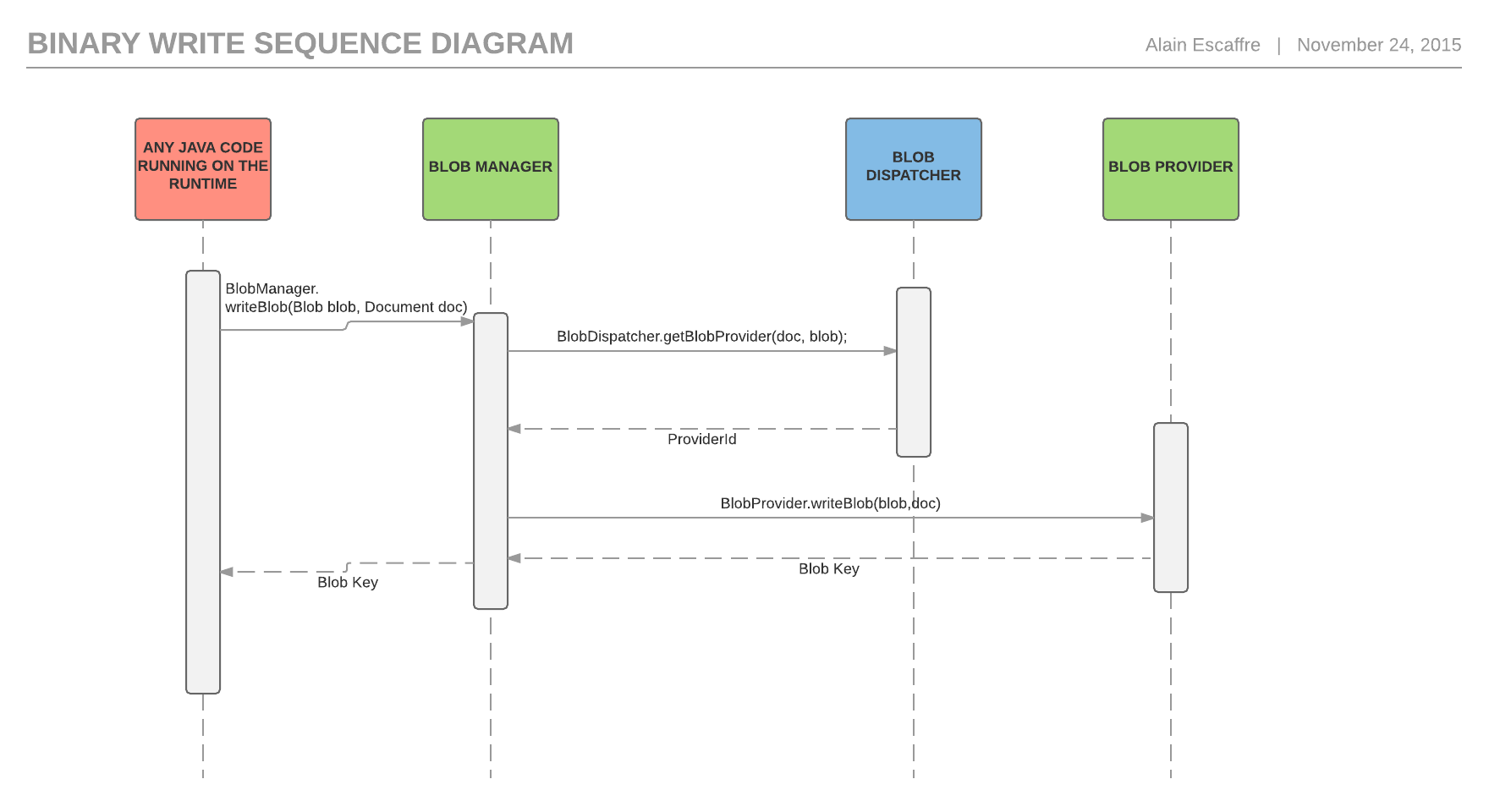

Bellow is a sequence diagram of what happens when writing a binary stream.

Default Blob Provider

Without installing any additional addon, you will find several Blob Provider implementations that you can use.

| Name | Class | Description | Resources |
|---|---|---|---|
| Default | org.nuxeo.ecm.core.blob.binary.DefaultBinaryManager | The default implementation. Stores binaries using their MD5 (or other) hash on the local filesystem. | Configuration |
| Encrypted | org.nuxeo.ecm.core.blob.binary.AESBinaryManager | Stores binaries encrypted with AES on the local filesystem. | Configuration |
| External File System | org.nuxeo.ecm.core.blob.FilesystemBlobProvider | Reads content stored on an external file system. | Configuration |
| SQL | org.nuxeo.ecm.core.storage.sql.SQLBinaryManager | Stores binaries as SQL BLOB objects in a SQL database. | |

To register a new Blob Provider, contribute a blobprovider element with the Java class of your Blob Provider to the configuration extension point:

    <extension target="org.nuxeo.ecm.core.blob.BlobManager" point="configuration">
      <blobprovider name="default">
        <class>org.nuxeo.ecm.core.blob.binary.DefaultBinaryManager</class>
        <property name="path">binaries</property>
      </blobprovider>
    </extension>

Additional Blob Providers

In addition to the default ones listed above, the following implementations exist and can be used:

| Name | Class | Description | Resources |
|---|---|---|---|
| Azure | org.nuxeo.ecm.blob.azure.AzureBinaryManager | Stores content on Azure object store | Nuxeo Package, Sources, Configuration |
| Azure CDN | org.nuxeo.ecm.blob.azure.AzureCDNBinaryManager | Stores content on Azure object store and reads it through Azure CDN | Nuxeo Package, Sources, Configuration |
| Amazon S3 | org.nuxeo.ecm.core.storage.sql.S3BinaryManager | Stores content on Amazon S3 | Nuxeo Package, Sources, Configuration |
| JClouds | org.nuxeo.ecm.blob.jclouds.JCloudsBinaryManager | Stores binaries using the Apache jclouds library into a wide range of possible back ends | Sources |
| Google Drive | org.nuxeo.ecm.liveconnect.google.drive.GoogleDriveBlobProvider | Reads content from Google Drive | Nuxeo Package, Sources |
| Dropbox | org.nuxeo.ecm.liveconnect.dropbox.DropboxBlobProvider | Reads content from Dropbox | Nuxeo Package, Sources |
| GridFS | org.nuxeo.ecm.core.storage.mongodb.blob.GridFSBinaryManager | Reads and writes content in MongoDB GridFS | Nuxeo Package, Sources, Configuration |

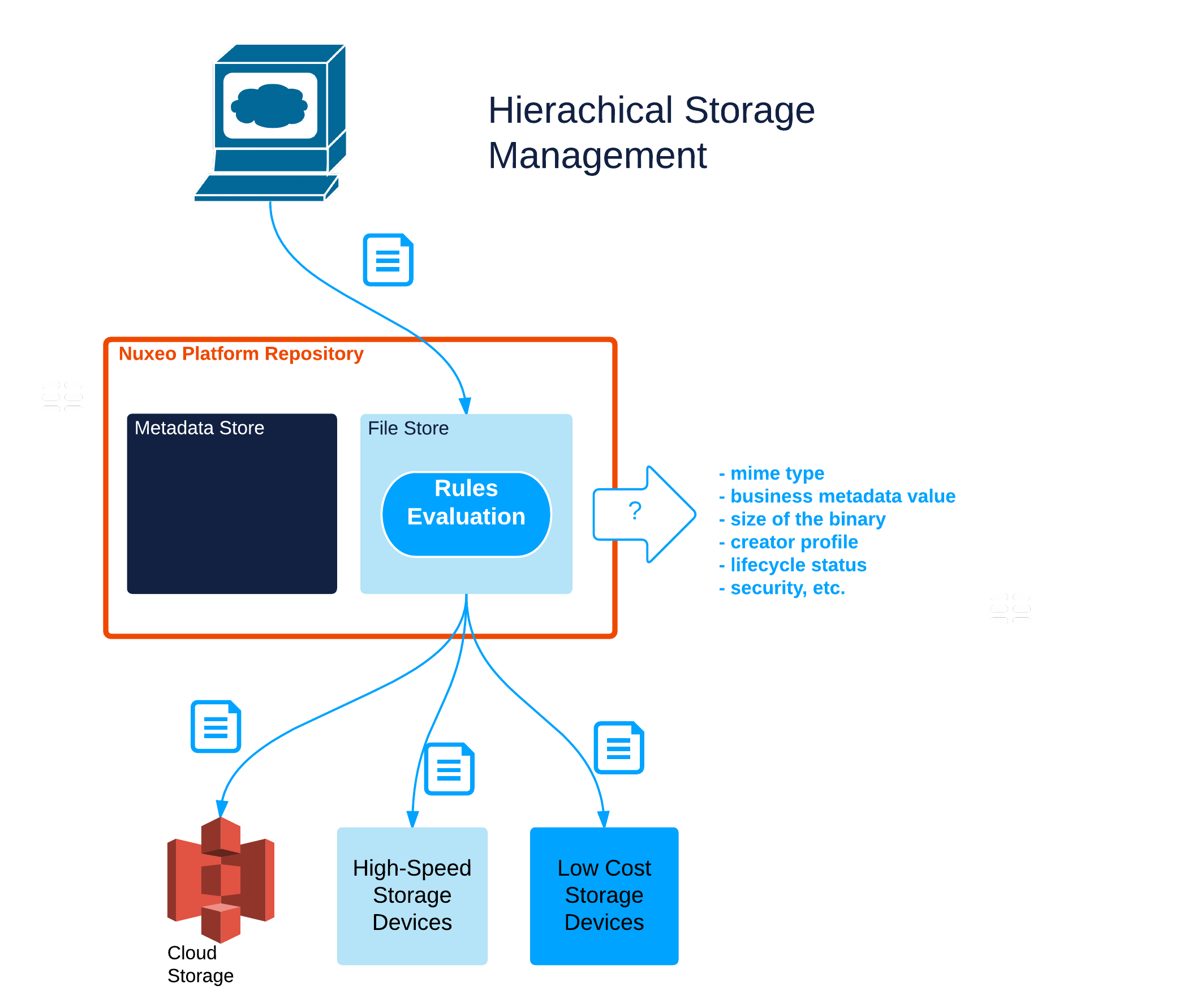

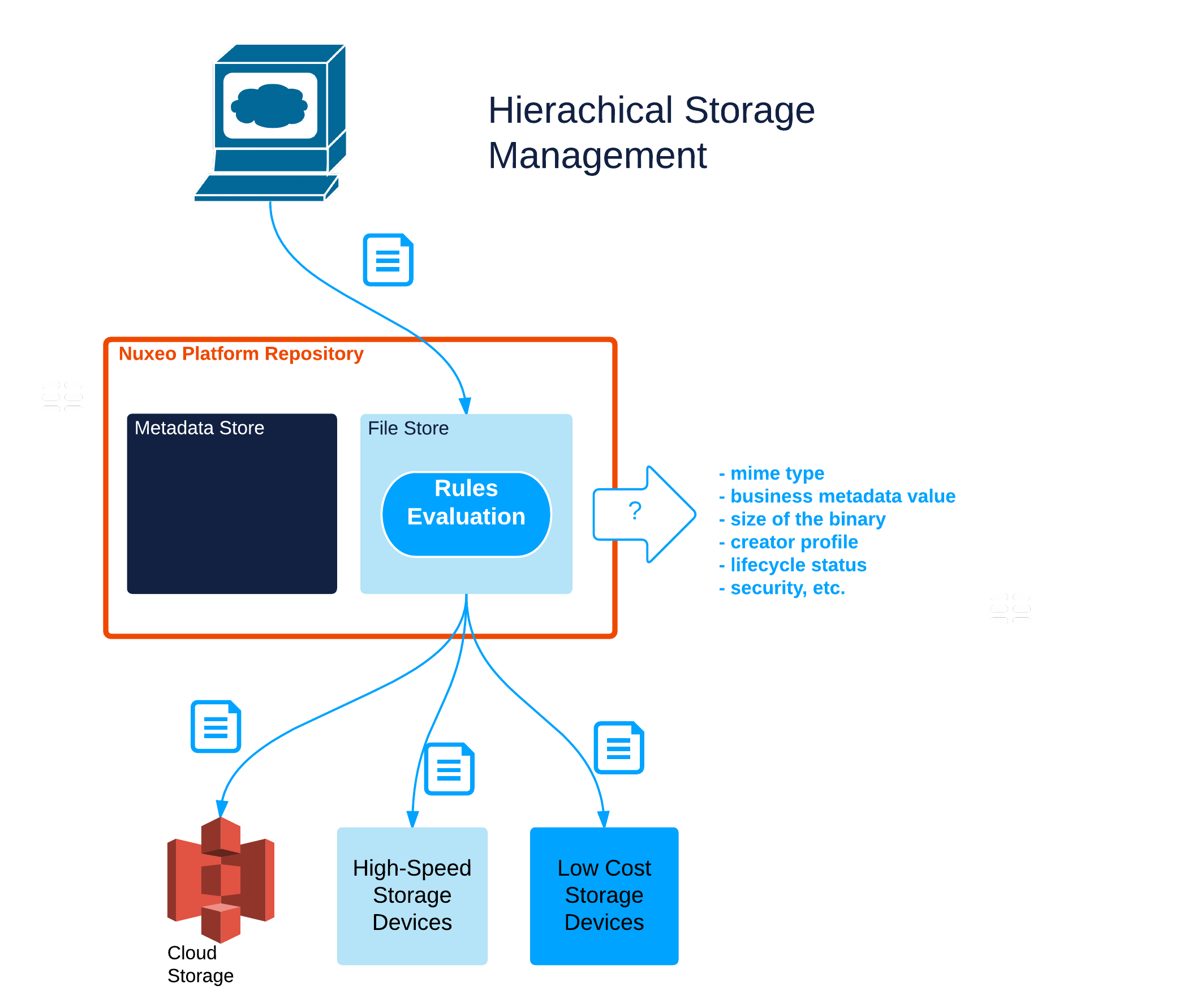

Blob Dispatcher and HSM

The Blob Manager allows to enforce typical HSM (Hierarchical Storage Management) behavior as illustrated in the high level schema below:

This is doable thanks to the BlobDispatcher class.

The role of the blob dispatcher is to decide, based on a blob and its containing document, where the blob's binary is actually going to be stored. The Nuxeo Platform provides a default blob dispatcher (org.nuxeo.ecm.core.blob.DefaultBlobDispatcher) that is easy to configure for most basic needs. But it can be replaced by a custom implementation if needed.

Without specific configuration, the DefaultBlobDispatcher stores a document's blob's binary in a blob provider with the same name as the document's repository name.

Advanced dispatching configurations are possible using properties. Each property name is a list of comma-separated clauses, with each clause consisting of a property, an operator and a value. The property can be a document XPath, ecm:repositoryName, or, to match the current blob being dispatched, blob:name, blob:mime-type, blob:encoding, blob:digest or blob:length. Comma-separated clauses are ANDed together. The property value is the name of the blob provider to use when the clauses match. The special name default defines the default provider, and must be present. Available operators between property and value are =, !=, <, and >.

For example, all the videos could be stored somewhere, the documents from a secret source in an encrypted area, and the rest in a third location. To do this, you would need to specify the following:

    <extension target="org.nuxeo.ecm.core.blob.BlobManager" point="configuration">
      <blobdispatcher>
        <class>org.nuxeo.ecm.core.blob.DefaultBlobDispatcher</class>
        <property name="dc:format=video">videos</property>
        <property name="blob:mime-type=video/mp4">videos</property>
        <property name="dc:source=secret">encrypted</property>
        <property name="default">default</property>
      </blobdispatcher>
    </extension>

This assumes that you have three blob providers configured, the default one and two additional ones, videos and encrypted. For example you could have:

    <extension target="org.nuxeo.ecm.core.blob.BlobManager" point="configuration">
      <blobprovider name="videos">
        <class>org.nuxeo.ecm.core.blob.binary.DefaultBinaryManager</class>
        <property name="path">binaries-videos</property>
      </blobprovider>
      <blobprovider name="encrypted">
        <class>org.nuxeo.ecm.core.blob.binary.AESBinaryManager</class>
        <property name="key">password=secret</property>
      </blobprovider>
    </extension>

If these rules are not sufficient, the DefaultBlobDispatcher class can be replaced by your own implementation.
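The clause matching described above can be sketched in a few lines of plain Java. This is a minimal illustration, not the DefaultBlobDispatcher source: the class name DispatchRuleSketch, the flat map of property values standing in for the document and blob, the first-matching-rule order, and the numeric handling of < and > are all assumptions.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical sketch of rule-based dispatching; not the Nuxeo implementation. */
final class DispatchRuleSketch {

    /** Rules in the iteration order of the input map: clause list -> provider name. */
    private final Map<String, String> rules = new LinkedHashMap<>();
    private final String defaultProvider;

    DispatchRuleSketch(Map<String, String> properties) {
        String def = null;
        for (Map.Entry<String, String> e : properties.entrySet()) {
            if ("default".equals(e.getKey())) {
                def = e.getValue();
            } else {
                rules.put(e.getKey(), e.getValue());
            }
        }
        if (def == null) {
            throw new IllegalArgumentException("the 'default' property must be present");
        }
        defaultProvider = def;
    }

    /** Returns the provider of the first rule whose clauses all match, else the default. */
    String providerFor(Map<String, String> values) {
        for (Map.Entry<String, String> rule : rules.entrySet()) {
            boolean allMatch = true;
            for (String clause : rule.getKey().split(",")) {
                if (!matches(clause.trim(), values)) {
                    allMatch = false; // clauses are ANDed: one failure rejects the rule
                    break;
                }
            }
            if (allMatch) {
                return rule.getValue();
            }
        }
        return defaultProvider;
    }

    /** Evaluates one "property operator value" clause; assumes values contain no operator characters. */
    private static boolean matches(String clause, Map<String, String> values) {
        // "!=" is tested before "=" because it contains it.
        for (String op : new String[] {"!=", "=", "<", ">"}) {
            int i = clause.indexOf(op);
            if (i < 0) {
                continue;
            }
            String actual = values.get(clause.substring(0, i).trim());
            String expected = clause.substring(i + op.length()).trim();
            switch (op) {
                case "=":
                    return expected.equals(actual);
                case "!=":
                    return !expected.equals(actual);
                default:
                    if (actual == null) {
                        return false;
                    }
                    long a = Long.parseLong(actual), b = Long.parseLong(expected);
                    return op.equals("<") ? a < b : a > b;
            }
        }
        throw new IllegalArgumentException("no operator in clause: " + clause);
    }
}
```

Fed with the four properties of the dispatcher contribution above, a value map containing blob:mime-type=video/mp4 resolves to videos, one containing dc:source=secret resolves to encrypted, and anything else falls back to default.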

Download Service

All platform code that performs a download (the WebDAV and CMIS APIs, custom actions in the UI, the main download servlet) goes through the same shared Java code: the download service. A typical download call looks like this:

    DownloadService downloadService = Framework.getService(DownloadService.class);
    downloadService.downloadBlob(req, resp, doc, xpath, null, filename, "download");

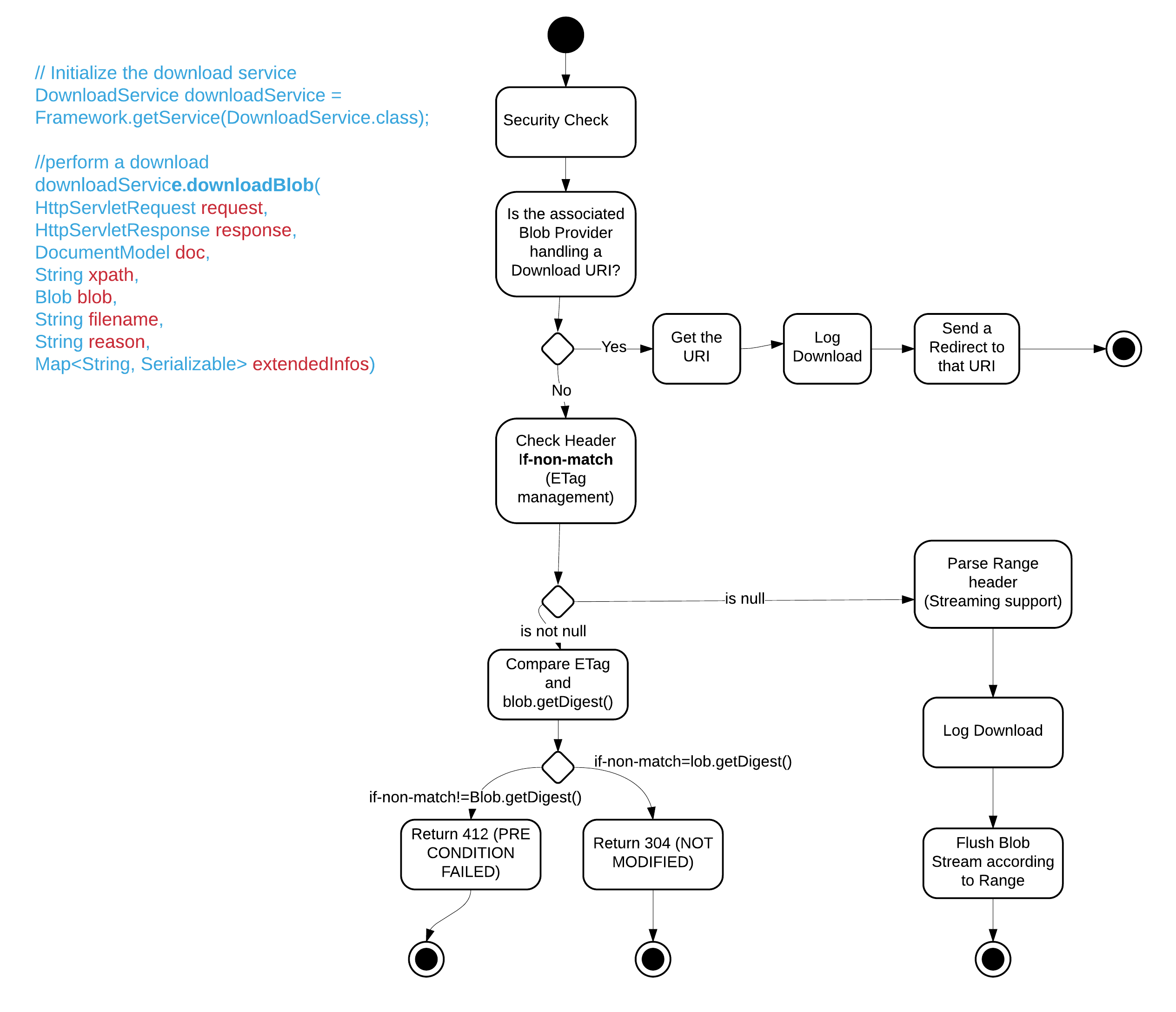

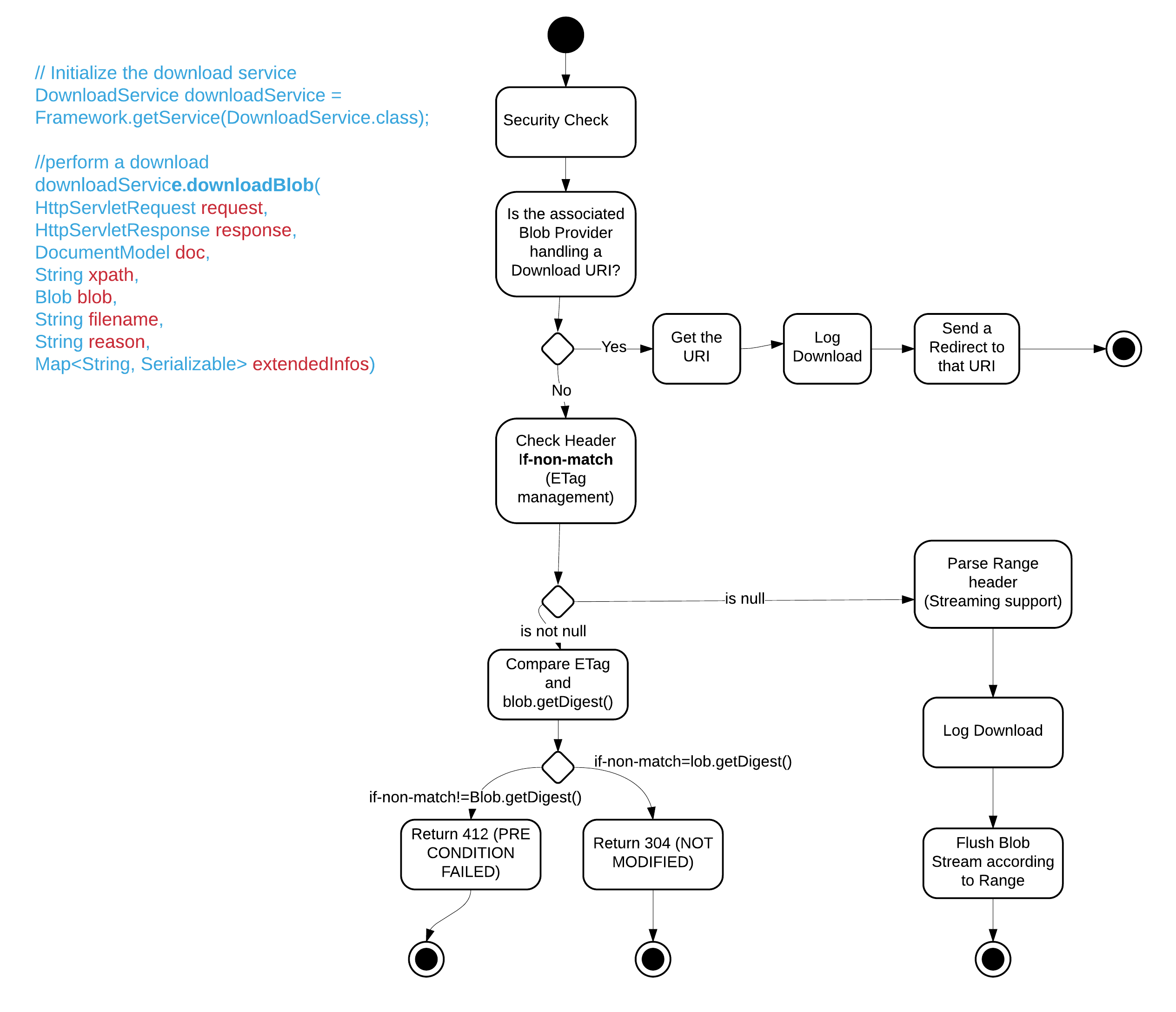

The download service handles the call to the blobManager, logs all download in the audit and also allows to contribute some security rules for authorizing or refusing download of blobs depending on the doc, the filename, the user, the rendition name, etc. The following activity diagram gives an idea of the behavior of what happens when downloading a file:

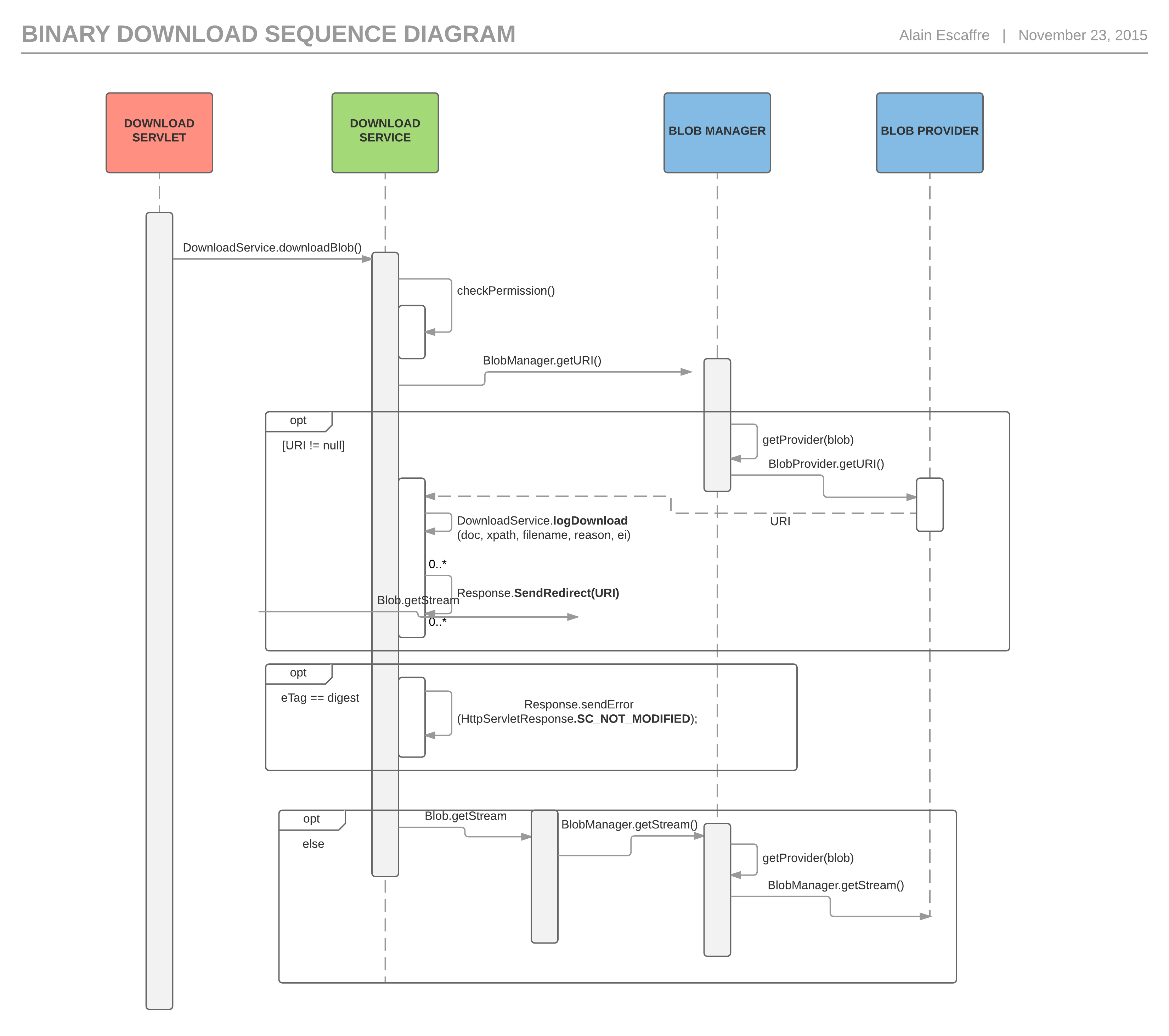

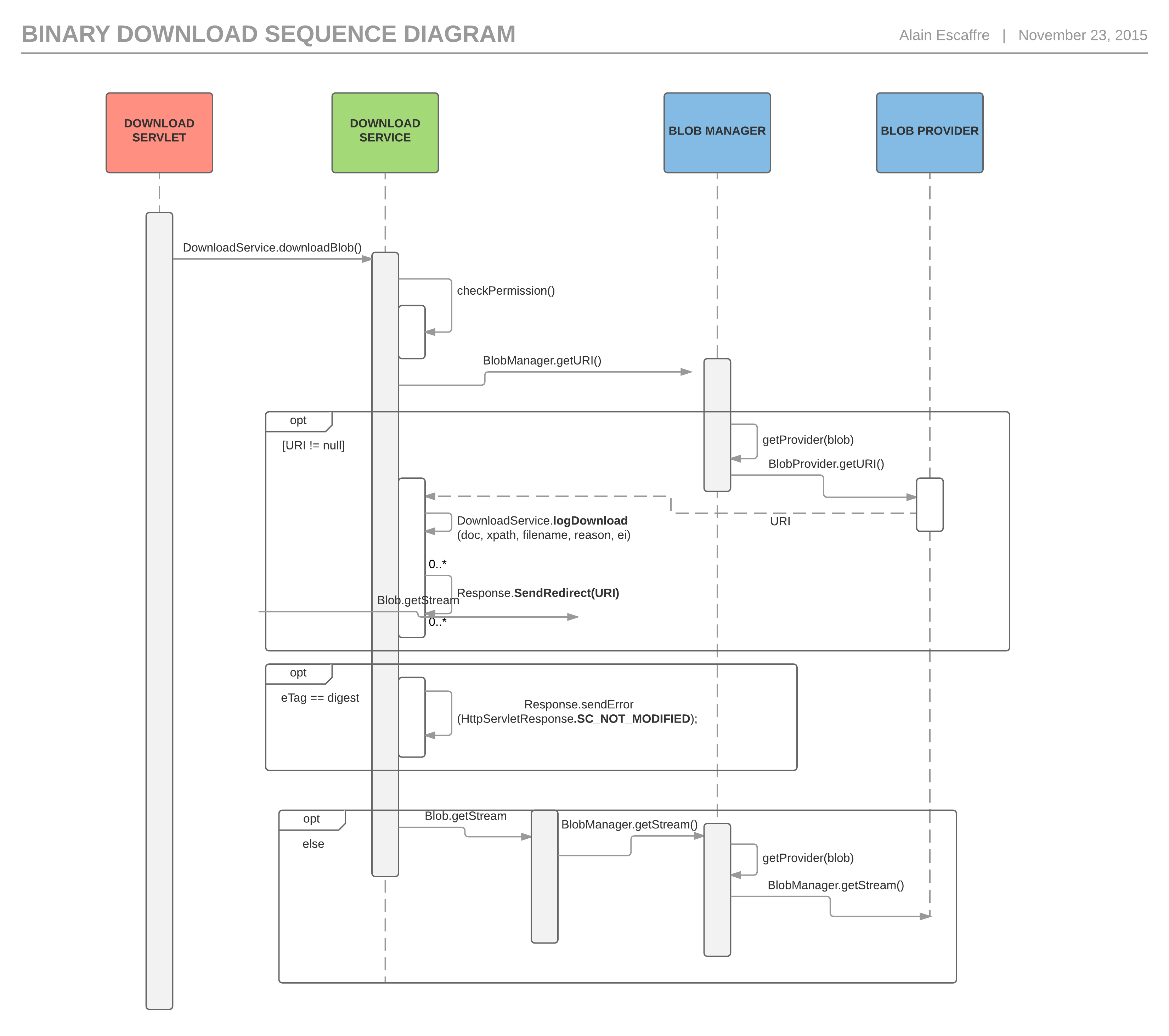

That can also be view as a sequence diagram to better understand each actor's roles:
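The combination of authorization rules and audit logging can be pictured with a small, self-contained sketch. Nothing here is the DownloadService API: the DownloadGuardSketch class, its Request fields and the choice that every rule must accept a request are hypothetical, used only to show the idea of pluggable rules evaluated before a download is recorded.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Predicate;

/** Hypothetical sketch of rule-based download authorization with audit logging. */
final class DownloadGuardSketch {

    /** The facts a rule can inspect; the field names are illustrative. */
    static final class Request {
        final String user;
        final String docId;
        final String fileName;
        final String reason;

        Request(String user, String docId, String fileName, String reason) {
            this.user = user;
            this.docId = docId;
            this.fileName = fileName;
            this.reason = reason;
        }
    }

    private final List<Predicate<Request>> rules = new ArrayList<>();
    private final List<String> auditLog = new ArrayList<>();

    void addRule(Predicate<Request> rule) {
        rules.add(rule);
    }

    /** Returns true and records an audit entry only if every rule accepts the request. */
    boolean authorize(Request request) {
        for (Predicate<Request> rule : rules) {
            if (!rule.test(request)) {
                return false;
            }
        }
        auditLog.add(request.user + " downloaded " + request.fileName
                + " from " + request.docId + " (" + request.reason + ")");
        return true;
    }

    List<String> auditLog() {
        return auditLog;
    }
}
```

A rule such as `request -> !"guest".equals(request.user)` would then refuse every download attempted by a guest user, and no audit entry would be written for the refused request.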

Download URL format

The default URL patterns for downloading files from within the JSF environment are:

http://NUXEO_SERVER/nuxeo/nxfile/{repository}/{uuid}/blobholder:{blobIndex}/{fileName}

http://NUXEO_SERVER/nuxeo/nxfile/{repository}/{uuid}/{propertyXPath}/{fileName}

Where:

- nxfile is the download servlet. Note that nxbigfile is also accepted for compatibility with older versions.

- repository is the identifier of the target repository.

- uuid is the uuid of the target document.

- blobIndex is the index of the Blob inside the BlobHolder adapter corresponding to the target Document Type, starting at 0: blobholder:0, blobholder:1.

- propertyXPath is the xPath of the target Blob property inside the target document. For instance: file:content, files:files/0/file.

- fileName is the name of the file as it should be downloaded. This information is optional and is actually not used to do the resolution.

- ?inline=true is an optional parameter to force the download to be made with Content-Disposition: inline. This means that the content will be displayed in the browser (if possible) instead of being downloaded.

Here are some examples:

- The main file of the document:

  http://NUXEO_SERVER/nuxeo/nxfile/default/776c8640-7f19-4cf3-b4ff-546ea1d3d496

- The main file of the document with a different name:

  http://NUXEO_SERVER/nuxeo/nxfile/default/776c8640-7f19-4cf3-b4ff-546ea1d3d496/blobholder:0/mydocument.pdf

- An attached file of the document:

  http://NUXEO_SERVER/nuxeo/nxfile/default/776c8640-7f19-4cf3-b4ff-546ea1d3d496/blobholder:1

- A file stored in the given property:

  http://NUXEO_SERVER/nuxeo/nxfile/default/776c8640-7f19-4cf3-b4ff-546ea1d3d496/myschema:content

- A file stored in the given complex property, downloaded with a specific filename:

  http://NUXEO_SERVER/nuxeo/nxfile/default/776c8640-7f19-4cf3-b4ff-546ea1d3d496/files:files/0/file/myimage.png

- The main file of the document inside the browser instead of being downloaded:

  http://NUXEO_SERVER/nuxeo/nxfile/default/776c8640-7f19-4cf3-b4ff-546ea1d3d496?inline=true
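When such URLs have to be produced from client code, the patterns above can be assembled with a small helper. The sketch below is only an illustration: DownloadUrlSketch and its method names are hypothetical and are not part of the Nuxeo API, and the base URL is assumed to already include the /nuxeo context path.

```java
import java.io.UnsupportedEncodingException;
import java.net.URLEncoder;

/** Hypothetical helper that assembles nxfile download URLs from the documented patterns. */
final class DownloadUrlSketch {

    /** Builds {base}/nxfile/{repository}/{uuid}[/{propertyXPath}[/{fileName}]][?inline=true]. */
    static String byXPath(String baseUrl, String repository, String uuid,
            String propertyXPath, String fileName, boolean inline) {
        StringBuilder url = new StringBuilder(baseUrl)
                .append("/nxfile/").append(repository).append('/').append(uuid);
        if (propertyXPath != null) {
            url.append('/').append(propertyXPath);
            if (fileName != null) {
                url.append('/').append(encodeSegment(fileName));
            }
        }
        if (inline) {
            url.append("?inline=true");
        }
        return url.toString();
    }

    /** Same as byXPath, addressing the blob by its index in the BlobHolder. */
    static String byBlobIndex(String baseUrl, String repository, String uuid,
            int blobIndex, String fileName, boolean inline) {
        return byXPath(baseUrl, repository, uuid, "blobholder:" + blobIndex, fileName, inline);
    }

    private static String encodeSegment(String segment) {
        try {
            // URLEncoder targets form encoding, so its "+" is turned back into "%20" for a path.
            return URLEncoder.encode(segment, "UTF-8").replace("+", "%20");
        } catch (UnsupportedEncodingException e) {
            throw new IllegalStateException("UTF-8 is always supported", e);
        }
    }
}
```

For instance, `DownloadUrlSketch.byBlobIndex("http://NUXEO_SERVER/nuxeo", "default", uuid, 0, "mydocument.pdf", false)` yields the second example URL of the list above for the same uuid.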

For the Picture document type, a similar system is available to get the attachments depending on the view name:

http://NUXEO_SERVER/nuxeo/nxpicsfile/{repository}/{uuid}/{viewName}:content/{fileName}

where, by default, viewName can be Original, OriginalJpeg, Medium, Thumbnail.

Default Blob Provider

The default Blob Provider implementation is based on a simple filesystem. Given its storage principles, this implementation is safe to use even on an NFS-like filesystem, since there are no conflicts. The storage API exposes the following methods:

    Binary getBinary(InputStream in);
    Binary getBinary(String digest);

As you can see, the methods do not have any document related parameters. This means the binary storage is independent from the documents:

- Moving a document does not impact the binary stream.

- Updating a document does not impact the binary stream.

In addition, the streams are stored using their digest. Thanks to that:

- BlobStore automatically manages de-duplication.

- BlobStore can be safely snapshotted (files are never moved or updated, and they are only removed via a GarbageCollection process).
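The de-duplication that follows from digest-based storage can be illustrated with a tiny in-memory store. This is a sketch under stated assumptions, not the Nuxeo BlobStore: the DigestStoreSketch class is hypothetical, it keeps content in a map instead of on a filesystem, and it always uses MD5 as the digest.

```java
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.HashMap;
import java.util.Map;

/** Hypothetical in-memory illustration of digest-keyed storage; not the Nuxeo BlobStore. */
final class DigestStoreSketch {

    private final Map<String, byte[]> binaries = new HashMap<>();

    /** Stores the content under its MD5 digest and returns that digest as the key. */
    String write(byte[] content) {
        String digest = md5Hex(content);
        // Identical content yields an identical key, so a second write stores nothing new.
        binaries.putIfAbsent(digest, content.clone());
        return digest;
    }

    /** Returns a copy of the content stored under the digest, or null if the digest is unknown. */
    byte[] read(String digest) {
        byte[] stored = binaries.get(digest);
        return stored == null ? null : stored.clone();
    }

    /** Number of distinct binaries currently stored. */
    int size() {
        return binaries.size();
    }

    static String md5Hex(byte[] content) {
        try {
            byte[] hash = MessageDigest.getInstance("MD5").digest(content);
            StringBuilder hex = new StringBuilder(hash.length * 2);
            for (byte b : hash) {
                hex.append(String.format("%02x", b & 0xff));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("MD5 is not available on this platform", e);
        }
    }
}
```

Writing the same bytes twice returns the same key and leaves a single entry in the store, and since an entry is never modified once written, copying the store at any moment gives a consistent snapshot.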

External File System

A Blob Provider is available for referencing files stored on an external file system that the Nuxeo server can reach: org.nuxeo.ecm.core.blob.FilesystemBlobProvider.

The root path is a property of the contribution:

    <extension target="org.nuxeo.ecm.core.blob.BlobManager" point="configuration">
      <blobprovider name="fs">
        <class>org.nuxeo.ecm.core.blob.FilesystemBlobProvider</class>
        <property name="root">/opt/nuxeo/nxserver/blobs</property>
        <property name="preventUserUpdate">true</property>
      </blobprovider>
    </extension>

The preventUserUpdate property is used by the UI framework to hide the option to update the blob from the user. Such a blob provider can be used when creating a document with the following code:

    BlobInfo blobInfo = new BlobInfo();
    blobInfo.key = "/opt/nuxeo/nxserver/blobs/foo/bar.pdf";
    blobInfo.mimeType = "application/pdf";
    BlobManager blobManager = Framework.getService(BlobManager.class);
    Blob blob = ((FilesystemBlobProvider) blobManager.getBlobProvider("fs")).createBlob(blobInfo);

Internally, the blob will then be stored in the database with the key fs:foo/bar.pdf.
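The relationship between the root path, the absolute file path and the stored key can be made explicit with a short sketch. It is a plain-Java illustration of the key format only: FsBlobKeySketch and its methods are hypothetical and do not reproduce the FilesystemBlobProvider code.

```java
/** Hypothetical illustration of provider-prefixed blob keys; not Nuxeo code. */
final class FsBlobKeySketch {

    /** Turns an absolute path under the provider root into a "providerId:relative/path" key. */
    static String toKey(String providerId, String root, String absolutePath) {
        String prefix = root.endsWith("/") ? root : root + "/";
        if (!absolutePath.startsWith(prefix)) {
            throw new IllegalArgumentException(absolutePath + " is not under " + root);
        }
        return providerId + ":" + absolutePath.substring(prefix.length());
    }

    /** Returns the provider prefix of a key, or null when the key has no prefix. */
    static String providerOf(String key) {
        int colon = key.indexOf(':');
        return colon < 0 ? null : key.substring(0, colon);
    }
}
```

With the configuration above, `toKey("fs", "/opt/nuxeo/nxserver/blobs", "/opt/nuxeo/nxserver/blobs/foo/bar.pdf")` gives fs:foo/bar.pdf, and `providerOf` applied to that key gives back fs, the prefix that identifies the Blob Provider in charge of the blob.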

Encryption

A common question regarding the Blob Manager is the support for encryption. See Implementing Encryption for more on the configuration options.

AES Encryption

Since Nuxeo Platform 6.0, it's possible to use a Blob Provider that encrypts files using AES. Two modes are possible:

- A fixed AES key retrieved from a Java KeyStore

- An AES key derived from a human-readable password using the industry-standard PBKDF2 mechanism.

While the files are in use by the application, a temporary unencrypted file is created. It is removed as soon as possible.
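The password-based mode can be illustrated with the standard Java cryptography API. The sketch below shows the general technique (PBKDF2 key derivation followed by AES encryption) and is not the AESBinaryManager implementation: the class name, the iteration count, the 128-bit key size, the HMAC-SHA256 variant and the CBC mode with PKCS5 padding are all assumptions chosen for the example.

```java
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import javax.crypto.Cipher;
import javax.crypto.SecretKey;
import javax.crypto.SecretKeyFactory;
import javax.crypto.spec.IvParameterSpec;
import javax.crypto.spec.PBEKeySpec;
import javax.crypto.spec.SecretKeySpec;

/** Hypothetical sketch of password-derived AES encryption; parameters are illustrative. */
final class PasswordAesSketch {

    private static final int ITERATIONS = 10_000; // assumed value, not Nuxeo's
    private static final int KEY_BITS = 128;      // assumed value, not Nuxeo's

    /** Derives an AES key from a human-readable password and a salt using PBKDF2. */
    static SecretKey deriveKey(char[] password, byte[] salt) {
        try {
            PBEKeySpec spec = new PBEKeySpec(password, salt, ITERATIONS, KEY_BITS);
            byte[] raw = SecretKeyFactory.getInstance("PBKDF2WithHmacSHA256")
                    .generateSecret(spec).getEncoded();
            return new SecretKeySpec(raw, "AES");
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Encrypts or decrypts depending on mode (Cipher.ENCRYPT_MODE or Cipher.DECRYPT_MODE). */
    static byte[] crypt(int mode, SecretKey key, byte[] iv, byte[] input) {
        try {
            Cipher cipher = Cipher.getInstance("AES/CBC/PKCS5Padding");
            cipher.init(mode, key, new IvParameterSpec(iv));
            return cipher.doFinal(input);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Generates random bytes, used here for the salt and the 16-byte IV. */
    static byte[] randomBytes(int length) {
        byte[] bytes = new byte[length];
        new SecureRandom().nextBytes(bytes);
        return bytes;
    }
}
```

The same password and salt always derive the same key, which is what allows a binary encrypted at write time to be decrypted later; the salt and the IV therefore have to be kept alongside the encrypted content.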

Built-in S3 Encryption

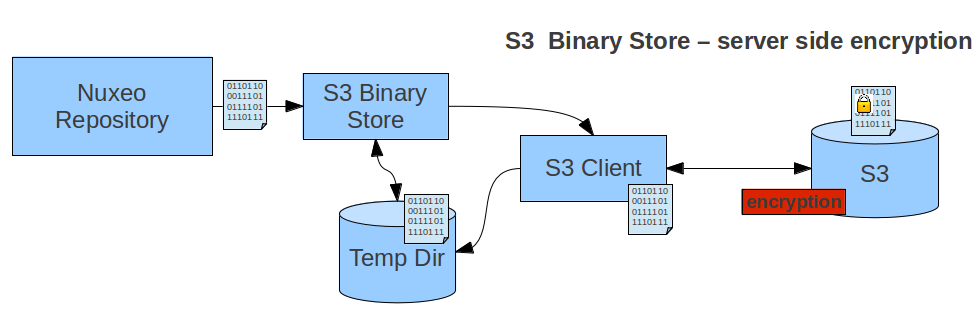

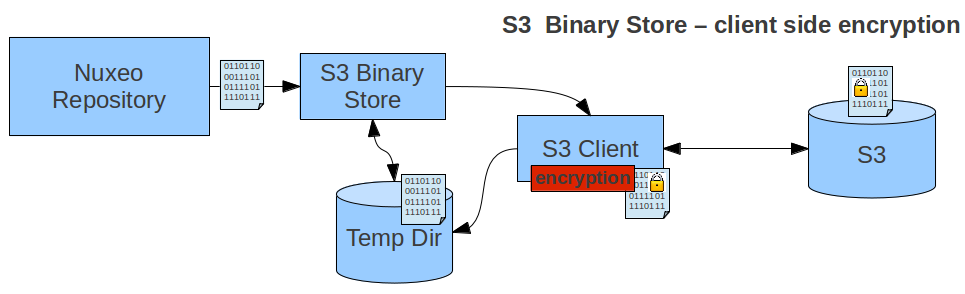

When using the Amazon S3 Online Storage package, the AWS S3 client library supports both client-side and server-side encryption:

- With server-side encryption, the encryption is completely transparent.

- In client-side encryption mode, Nuxeo Platform manages the encrypt/decrypt process using the AWS S3 client library. The local temporary file is unencrypted.