Deployment Options

We present several Nuxeo reference architectures, from the most compact to the most complex, the latter providing a fully highly available and fault-tolerant Nuxeo architecture. The composition and limitations of each architecture are described for each case.

General Considerations

Applications on the Same Machine

A frequently asked question is whether some applications can share the same machine. The answer is yes, within limits. We show such an option here and explain the design choices.

Let's see how applications can be merged.

- Load balancers are usually deployed on machines separate from the Nuxeo server nodes; otherwise, stopping a Nuxeo node would also affect how requests are served.

- On the machines where Nuxeo server nodes will be installed, a reverse proxy can be installed as well. It is lightweight, and having a reverse proxy for each Nuxeo node makes sense: if it fails for some reason, only the Nuxeo node behind it will be affected.

- Elasticsearch nodes should be installed on dedicated machines for performance reasons. Elasticsearch is typically given half of the machine's memory for its JVM heap, leaving the other half to the operating system (notably for the file system cache), and it is not designed to share memory with another JVM-based application.

- Kafka brokers are better placed on dedicated machines for isolation purposes.

- A ZooKeeper node is a lightweight component and can be installed on the Nuxeo nodes.
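The placement guidelines above can be expressed as a few simple rules. The following is a minimal Java sketch, not a Nuxeo tool: the `PlacementCheck` class and `Component` enum are hypothetical, and it treats "usually" in the load balancer guideline as a strict rule for the sake of illustration.

```java
import java.util.ArrayList;
import java.util.EnumSet;
import java.util.List;
import java.util.Set;

public class PlacementCheck {

    // Components of the reference architecture (hypothetical names for this sketch).
    enum Component { LOAD_BALANCER, REVERSE_PROXY, NUXEO, ELASTICSEARCH, KAFKA, ZOOKEEPER }

    // Components that, per the guidelines, should run alone on dedicated machines.
    static final Set<Component> DEDICATED = EnumSet.of(Component.ELASTICSEARCH, Component.KAFKA);

    /**
     * Returns the rule violations for the set of components installed on one machine.
     * An empty list means the placement follows the guidelines. REVERSE_PROXY and
     * ZOOKEEPER are allowed next to NUXEO, so they trigger no rule.
     */
    static List<String> check(Set<Component> host) {
        List<String> problems = new ArrayList<>();
        for (Component c : DEDICATED) {
            if (host.contains(c) && host.size() > 1) {
                problems.add(c + " should run on a dedicated machine");
            }
        }
        // Stopping a Nuxeo node must not take the load balancer down with it.
        if (host.contains(Component.LOAD_BALANCER) && host.contains(Component.NUXEO)) {
            problems.add("LOAD_BALANCER should not share a machine with NUXEO");
        }
        return problems;
    }

    public static void main(String[] args) {
        // A Nuxeo node with its own reverse proxy and a ZooKeeper node: allowed.
        System.out.println(check(EnumSet.of(Component.NUXEO, Component.REVERSE_PROXY, Component.ZOOKEEPER)));
        // Elasticsearch co-located with Nuxeo: flagged.
        System.out.println(check(EnumSet.of(Component.NUXEO, Component.ELASTICSEARCH)));
    }
}
```

Running `main` prints an empty list for the first machine and a single Elasticsearch violation for the second.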

Cloud or Container-Based Deployment

This diagram translates directly to an on-premises deployment using a container-based technology such as Docker, and we provide a ready-to-use Docker image for Nuxeo Server.

Nuxeo Platform also makes it easy to deploy in the Cloud since:

- It is standards-based.

- Its pluggable component model (extension points) allows you to easily change a backend implementation when needed.
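To illustrate why a pluggable component model eases cloud deployment, here is a plain-Java analogy rather than Nuxeo's actual mechanism (Nuxeo contributes implementations to extension points through its own component model). Everything in it, including the `BlobStore` interface, the `LocalFsStore` and `ObjectStore` classes and the name-based registry, is hypothetical, and both stores are simulated in memory.

```java
import java.nio.charset.StandardCharsets;
import java.util.HashMap;
import java.util.Map;
import java.util.function.Supplier;

public class PluggableBackends {

    /** Hypothetical backend contract: application code depends only on this interface. */
    interface BlobStore {
        void put(String key, byte[] data);
        byte[] get(String key);
        String name();
    }

    /** Shared in-memory storage so the sketch runs without a file system or cloud account. */
    static abstract class InMemoryStore implements BlobStore {
        private final Map<String, byte[]> blobs = new HashMap<>();
        public void put(String key, byte[] data) { blobs.put(key, data.clone()); }
        public byte[] get(String key) {
            byte[] b = blobs.get(key);
            return b == null ? null : b.clone();
        }
    }

    /** Stand-in for an on-premises, file-system-based backend (simulated). */
    static class LocalFsStore extends InMemoryStore {
        public String name() { return "local"; }
    }

    /** Stand-in for a cloud object-storage backend such as S3 (simulated, no network calls). */
    static class ObjectStore extends InMemoryStore {
        public String name() { return "object-storage"; }
    }

    // Plays the role of an extension point: implementations are contributed under a name.
    static final Map<String, Supplier<BlobStore>> REGISTRY = new HashMap<>();
    static {
        REGISTRY.put("local", LocalFsStore::new);
        REGISTRY.put("cloud", ObjectStore::new);
    }

    /** Instantiates the backend selected by configuration. */
    static BlobStore create(String configuredName) {
        Supplier<BlobStore> factory = REGISTRY.get(configuredName);
        if (factory == null) {
            throw new IllegalArgumentException("Unknown backend: " + configuredName);
        }
        return factory.get();
    }

    public static void main(String[] args) {
        // The calling code is identical for both backends; only the configured name changes.
        for (String choice : new String[] {"local", "cloud"}) {
            BlobStore store = create(choice);
            store.put("doc-1", "hello".getBytes(StandardCharsets.UTF_8));
            String back = new String(store.get("doc-1"), StandardCharsets.UTF_8);
            System.out.println(store.name() + " -> " + back);
        }
    }
}
```

Because callers depend only on the interface, switching backends becomes a configuration change (`"local"` versus `"cloud"` here) rather than a code change.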

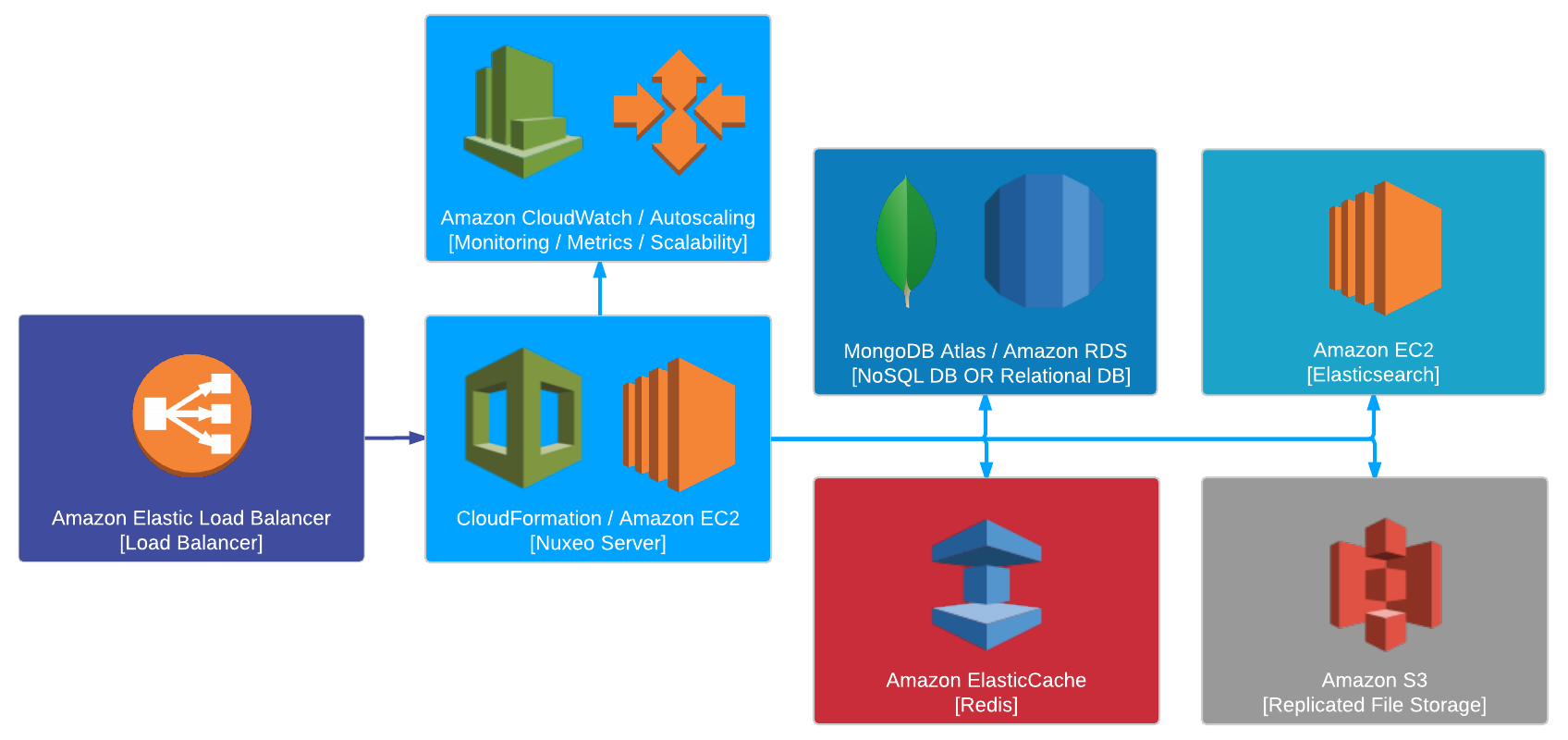

For example, with Amazon Web Services (AWS) as the Cloud infrastructure provider:

- AWS Elastic Load Balancing (ELB) can be used for load balancing.

- Amazon OpenSearch Service (successor to Amazon Elasticsearch Service) or Elastic Cloud can be used as a managed search service. Another option is to manually set up an Elasticsearch cluster on EC2 instances.

- Amazon Managed Streaming for Apache Kafka (Amazon MSK) is an option for Kafka if it is available in your AWS region.

- The database can be hosted on Amazon RDS; this works natively, as there is nothing Amazon-specific in this case. MongoDB Atlas is also an option for a hosted MongoDB cluster.

- An Amazon S3 bucket can be used for replicated file storage. Our BinaryManager is pluggable, and we use this to leverage Amazon S3 storage capabilities.

The same applies to Cloud-specific services such as provisioning and monitoring. We try to provide everything needed to make deployment on the target IaaS easy and painless:

- Nuxeo is packaged (among other options) as Debian packages or a Docker image:
  - We can easily set up Nuxeo on top of Amazon Machine Images (AMIs).
  - We can use CloudFormation, and we provide a template for it.

- Nuxeo exposes its metrics via JMX:
  - CloudWatch can monitor Nuxeo.
  - We can use autoscaling.
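As a rough illustration of what exposing metrics via JMX means for a monitoring agent, the following Java sketch reads two attributes through the standard `javax.management` API. It deliberately uses the standard JVM MBeans (`java.lang:type=Memory`, `java.lang:type=Threading`) instead of Nuxeo-specific ones, whose names are not listed here. The `JmxProbe` class and the `connectRemote` helper are hypothetical, and a remote connection only works if JMX remote access has been enabled on the target JVM at the host and port you pass in.

```java
import java.io.IOException;
import java.lang.management.ManagementFactory;
import javax.management.JMException;
import javax.management.MBeanServerConnection;
import javax.management.ObjectName;
import javax.management.openmbean.CompositeData;
import javax.management.remote.JMXConnector;
import javax.management.remote.JMXConnectorFactory;
import javax.management.remote.JMXServiceURL;

public class JmxProbe {

    /** Reads the used heap (in bytes) from the standard JVM Memory MBean. */
    static long usedHeapBytes(MBeanServerConnection conn) {
        try {
            ObjectName memory = new ObjectName("java.lang:type=Memory");
            // MXBean attributes are exposed as open types: MemoryUsage becomes CompositeData.
            CompositeData heap = (CompositeData) conn.getAttribute(memory, "HeapMemoryUsage");
            return (Long) heap.get("used");
        } catch (JMException | IOException e) {
            throw new IllegalStateException("JMX read failed", e);
        }
    }

    /** Reads the current number of live threads from the standard JVM Threading MBean. */
    static int liveThreads(MBeanServerConnection conn) {
        try {
            ObjectName threading = new ObjectName("java.lang:type=Threading");
            return (Integer) conn.getAttribute(threading, "ThreadCount");
        } catch (JMException | IOException e) {
            throw new IllegalStateException("JMX read failed", e);
        }
    }

    /**
     * Opens an RMI-based JMX connection to a remote JVM (host and port are assumptions).
     * The caller must close the returned connector, e.g. with try-with-resources.
     */
    static JMXConnector connectRemote(String host, int port) throws IOException {
        JMXServiceURL url = new JMXServiceURL(
                "service:jmx:rmi:///jndi/rmi://" + host + ":" + port + "/jmxrmi");
        return JMXConnectorFactory.connect(url);
    }

    public static void main(String[] args) {
        // Probe this JVM's own platform MBean server so the sketch runs without a Nuxeo node.
        MBeanServerConnection local = ManagementFactory.getPlatformMBeanServer();
        System.out.println("heap used (bytes): " + usedHeapBytes(local));
        System.out.println("live threads: " + liveThreads(local));
    }
}
```

A tool that forwards JMX values to CloudWatch, and any autoscaling rules built on those values, would poll attributes in a similar way; the exact agent setup depends on your environment.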